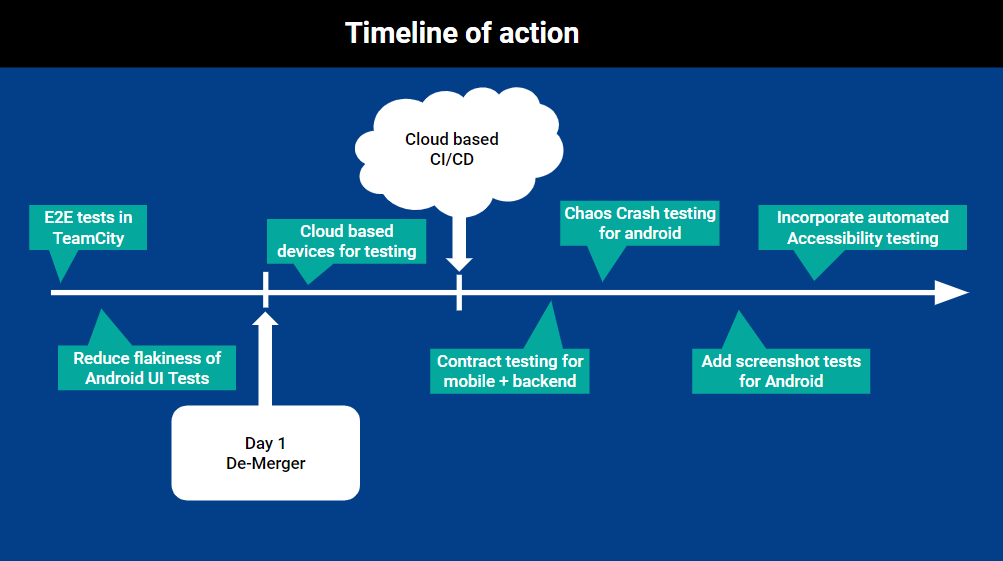

On my previous team we had a mobile app test strategy with some gaps we were trying to fill. This blog post reflects on those gaps and how we had planned to fill them.


The TL;DR (too long; didn’t read)

We had been working on an Android and iOS app for over 2 years. We released version 1 in March last year and had been adding new features and iterating on customer feedback since. However, we still had some gaps in our test approach. Here’s a summary of those gaps:

- Get our E2E tests working in TeamCity CI/CD

- Reduce flakiness of our Android UI tests

- Explore using cloud-based device test platforms

- Implement contract testing between mobile and backend

- Add crash testing to our CI/CD for Android

- Add screenshot tests for Android

- Incorporate some form of automated accessibility testing

Dependencies

We were working for one of Australia’s big four banks, but we were in a part of the business that was about to be sold off. Getting approval to make our own CI/CD (Continuous Integration/Continuous Delivery) pipeline changes was hard.

Once the sale went through, we’d have more flexibility to use tools that suited us. That’s why many of these gaps were dependent on the demerger, and why we were talking about using GitHub’s CI/CD instead of TeamCity.

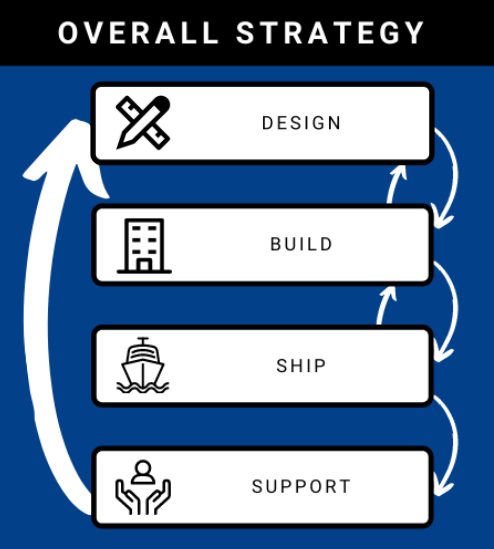

Testing = feedback loops

I like to think about testing in terms of feedback loops. Testing is about getting feedback on the state of the product, to help us answer the question, “Are we comfortable shipping this product to customers?”

When we build feedback loops at the right level, we create a giant quality net (or filter) for our product. With these feedback loops in place, hopefully no major bugs get through to production. We don’t aim to prevent all bugs; that’s impossible.

I like to think of 4 main feedback loops in how we build our app:

- Design

- Build

- Ship

- Support

The Gaps

E2E Tests in TeamCity

We originally had an E2E test framework written in Java with Appium, but no one else on the team had Java skills. Our backend is written in C#, so we rewrote the framework in C#. The framework was very lightweight and didn’t do any exhaustive testing. All it was meant to test was, “Can every supported app version talk to the current APIs in development?”

We were only checking whether the API returned a response. No business logic was meant to be tested at this level. We still didn’t have these tests running in our CI/CD pipeline because of delays in getting it all set up.
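Our real framework drove the app with Appium in C#, but the core idea, a matrix of every supported app version against every API endpoint where the only question is “did it respond?”, can be sketched in a few lines. This is a minimal Python sketch under stated assumptions: `fetch` is a hypothetical callable that makes a request the way a given app version would and returns an HTTP status code, and “responded” is assumed to mean a 2xx status.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical: fetch(endpoint, app_version) makes a request the way that
# app version would and returns the HTTP status code.
Fetcher = Callable[[str, str], int]


def smoke_check(versions: List[str], endpoints: List[str],
                fetch: Fetcher) -> Dict[Tuple[str, str], bool]:
    """Check every (app version, endpoint) pair for a 2xx response."""
    results: Dict[Tuple[str, str], bool] = {}
    for version in versions:
        for endpoint in endpoints:
            try:
                status = fetch(endpoint, version)
            except OSError:
                # Connection problems count as "no response".
                results[(version, endpoint)] = False
                continue
            # Only "did it respond?" is checked; response bodies are ignored,
            # so no business logic is tested at this level.
            results[(version, endpoint)] = 200 <= status < 300
    return results


def failures(results: Dict[Tuple[str, str], bool]) -> List[Tuple[str, str]]:
    """Return the (version, endpoint) pairs that did not get a 2xx response."""
    return sorted(pair for pair, ok in results.items() if not ok)


if __name__ == "__main__":
    def fake_fetch(endpoint: str, version: str) -> int:
        # Pretend app version 1.0.0 can no longer reach /accounts.
        return 404 if (version, endpoint) == ("1.0.0", "/accounts") else 200

    # prints [('1.0.0', '/accounts')]
    print(failures(smoke_check(["1.0.0", "1.2.0"], ["/accounts", "/login"], fake_fetch)))
```

Keeping the check this shallow is deliberate: it stays fast and stable enough to run against every supported version on each build, while business logic is left to lower-level tests.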

Reduce flakiness of Android UI Tests

Ah Android, why do you have to be so flaky? Admittedly, we hadn’t invested much effort in improving these tests yet, but it wouldn’t be worth adding them to a CI/CD pipeline if we were just going to ignore the results due to perceived flakiness.

Also, our TeamCity agents only had an Android 6 emulator installed, and it would have been painful to get more emulators installed. If Android tests are flaky on newer versions of Android, they are even worse on older versions.
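Before trusting a UI suite in CI, it helps to separate tests that are genuinely flaky from ones that are simply broken. A minimal sketch, assuming a hypothetical `run_test(name)` that runs one UI test on an emulator and returns `True` on a pass: re-run each test several times on the same build and label it by whether the outcomes agree.

```python
from typing import Callable, Dict, Iterable


def classify(tests: Iterable[str], run_test: Callable[[str], bool],
             runs: int = 5) -> Dict[str, str]:
    """Run each test `runs` times and label it 'pass', 'fail' or 'flaky'."""
    if runs < 2:
        raise ValueError("need at least two runs to detect flakiness")
    labels: Dict[str, str] = {}
    for name in tests:
        # Collect the distinct outcomes across repeated runs of the same code.
        outcomes = {run_test(name) for _ in range(runs)}
        if outcomes == {True}:
            labels[name] = "pass"
        elif outcomes == {False}:
            labels[name] = "fail"
        else:
            # Mixed results with no code change: the test or environment is flaky.
            labels[name] = "flaky"
    return labels


if __name__ == "__main__":
    from itertools import cycle

    alternating = cycle([True, False])

    def fake_run(name: str) -> bool:
        # Hypothetical stand-in for launching an instrumentation test.
        if name == "login_test":
            return True
        if name == "transfer_test":
            return next(alternating)
        return False

    # prints {'login_test': 'pass', 'transfer_test': 'flaky', 'broken_test': 'fail'}
    print(classify(["login_test", "transfer_test", "broken_test"], fake_run))
```

Tests labelled `flaky` are the ones whose results a team would end up ignoring, so they are the ones worth fixing (or quarantining) before the suite is wired into a pipeline.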

Cloud-Based Device Testing

With a mocking framework built into the mobile app, we could test the app independently without ever talking to a backend environment. This was *chef’s kiss*, the best thing we had for the app. Even support and marketing used this feature to help support the app.

So once we demerged, we could use any of a plethora of cloud-based mobile device testing farms if we wanted to, from AWS Device Farm to BrowserStack.
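The pattern behind an in-app mock mode is roughly this: the app talks to an API client interface, and a launch flag decides whether that is the live network client or a mock serving canned data. Our app was written in Swift and Kotlin; the Python below only illustrates the shape of the idea, and every name in it (`ApiClient`, `make_client`, the `mock` launch argument, the example URL) is hypothetical.

```python
from abc import ABC, abstractmethod
from typing import Dict


class ApiClient(ABC):
    @abstractmethod
    def get_balance(self, account_id: str) -> int:
        """Return the balance (in cents) for an account."""


class LiveApiClient(ApiClient):
    def __init__(self, base_url: str) -> None:
        self.base_url = base_url

    def get_balance(self, account_id: str) -> int:
        # The real client would make an HTTP call here; omitted in this sketch.
        raise NotImplementedError("network access is out of scope for this sketch")


class MockApiClient(ApiClient):
    def __init__(self, balances: Dict[str, int]) -> None:
        self.balances = balances

    def get_balance(self, account_id: str) -> int:
        # Canned data: deterministic and needs no backend environment.
        return self.balances.get(account_id, 0)


def make_client(launch_args: Dict[str, str]) -> ApiClient:
    """Pick the client from a hypothetical 'mock' launch argument."""
    if launch_args.get("mock") == "true":
        return MockApiClient({"acc-1": 12_345})
    return LiveApiClient(launch_args.get("base_url", "https://api.example.com"))
```

Because the mock lives inside the app build, the same binary can run on any device, including a remote device farm that has no network route to internal test environments.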

Contract testing for mobile

I had used contract testing on a previous team, but we weren’t able to build it for this team because we couldn’t get our own Pact Broker added to our CI/CD infrastructure. Something about networking.

However, we would soon have the option of filling this gap. We’d have to build a wrapper library for Swift/Kotlin to publish contracts to the broker, but we had already been doing this for our downstream APIs.
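To show what consumer-driven contract testing checks, here is a hand-rolled Python sketch. It is not the Pact library: the contract dictionary is only loosely modeled on the shape of a Pact file, the matching is a simplified "same fields, same types" check, and names such as `accounts-api` and `call_provider` are hypothetical.

```python
from typing import Any, Callable, Dict, List, Tuple

# Consumer side: the mobile app states what it needs from the provider.
CONTRACT: Dict[str, Any] = {
    "consumer": {"name": "mobile-app"},
    "provider": {"name": "accounts-api"},
    "interactions": [
        {
            "description": "get account balance",
            "request": {"method": "GET", "path": "/accounts/acc-1/balance"},
            "response": {"status": 200,
                         "body": {"accountId": "acc-1", "balance": 100}},
        }
    ],
}


def _type_mismatches(expected: Any, actual: Any, path: str = "body") -> List[str]:
    """Compare by type, not value; extra fields in `actual` are allowed."""
    if isinstance(expected, dict):
        if not isinstance(actual, dict):
            return [f"{path}: expected object"]
        problems: List[str] = []
        for key, value in expected.items():
            if key not in actual:
                problems.append(f"{path}.{key}: missing")
            else:
                problems.extend(_type_mismatches(value, actual[key], f"{path}.{key}"))
        return problems
    if type(expected) is not type(actual):
        return [f"{path}: expected {type(expected).__name__}, "
                f"got {type(actual).__name__}"]
    return []


def verify(contract: Dict[str, Any],
           call_provider: Callable[[str, str], Tuple[int, Any]]) -> List[str]:
    """Provider side: replay each interaction and report any problems."""
    problems: List[str] = []
    for interaction in contract["interactions"]:
        req, expected = interaction["request"], interaction["response"]
        status, body = call_provider(req["method"], req["path"])
        if status != expected["status"]:
            problems.append(f"{interaction['description']}: status {status}")
        problems.extend(_type_mismatches(expected["body"], body))
    return problems
```

Matching on types rather than exact values lets the provider return real data while still failing fast if, say, `balance` changes from a number to a string, which is exactly the kind of break that would otherwise only show up in the app.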

Crash Testing for Android

Using ADB commands and a UI exerciser, we could send random UI events to an Android emulator to try to find crashes. The idea was to run this on a different Android version every night for a few hours, so that over a sprint cycle we’d build up some pretty extensive crash testing.
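A nightly rotation like this could be scripted around Android’s UI/Application Exerciser Monkey (`adb shell monkey`). This is a minimal Python sketch under assumptions: the package name, the emulator serial, and the list of API levels are hypothetical, one emulator per API level is assumed to already be running, and the crash marker `// CRASH:` is assumed to be how Monkey reports a crash in its output. The flags used (`-p`, `-s`, `--throttle`, `--pct-syskeys`, `-v`) are standard Monkey options.

```python
import subprocess
from typing import List, Sequence

# Hypothetical emulator images; API 23 is Android 6, like our TeamCity agents.
API_LEVELS = (23, 26, 29, 31, 33)


def api_level_for_night(night: int, levels: Sequence[int] = API_LEVELS) -> int:
    """Rotate through API levels so a sprint covers several Android versions."""
    return levels[night % len(levels)]


def monkey_command(package: str, seed: int, events: int, serial: str) -> List[str]:
    return [
        "adb", "-s", serial, "shell", "monkey",
        "-p", package,         # only send events to our app's package
        "-s", str(seed),       # fixed seed so a crashing run can be replayed
        "--throttle", "200",   # 200 ms between events
        "--pct-syskeys", "0",  # no system keys such as Home, Back or Volume
        "-v",
        str(events),
    ]


def crashes(monkey_output: str) -> List[str]:
    """Return crash report lines (assumed to start with '// CRASH:')."""
    return [line for line in monkey_output.splitlines()
            if line.startswith("// CRASH:")]


def run_night(night: int, package: str, serial: str,
              events: int = 50_000) -> List[str]:
    # 50,000 events at a 200 ms throttle is at least ~2.8 hours of activity.
    cmd = monkey_command(package, seed=night, events=events, serial=serial)
    result = subprocess.run(cmd, capture_output=True, text=True)
    return crashes(result.stdout + result.stderr)
```

Using the night number as the seed means any crash found can be reproduced by re-running Monkey with the same seed on the same API level. Setting `--pct-syskeys` to 0 reduces how often the app gets sent to the background, though it does not stop Monkey from reaching other parts of the device.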

Summary

There were a few other gaps we could look at closing, such as screenshot tests for Android and accessibility checking, but they weren’t as high a priority, and I’m not yet convinced they would deliver as much value as the other items.

Are there any gaps in your current test approach you are trying to fix?

I haven’t worked with this Google tool, but I have some experience with a similar Amazon testing tool. In my experience it might help find some edge cases, but most of the time it did weird things like changing the user profile on the device (completely unrelated to the app, but since the gestures are random it quite often exited the app during the test). The test ended and created lots of issues for me to investigate, only for me to find out they were unrelated to my project.

Is the Exerciser Monkey smarter, or do we need to be careful about this?

The Exerciser Monkey is not that smart. It will change user settings randomly too, but that doesn’t stop the test running. It will often bring the app back into the foreground after backgrounding it. It only does random UI interactions on the app itself, but it will change user settings if the app is in the background (e.g. change the volume or put the phone into aeroplane mode).